URL extractor Chrome source code

8/18/2023

You can find more information regarding conditionals in Use conditionals.

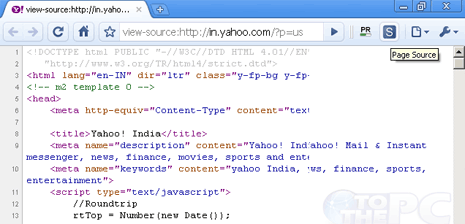

The conditional allows you to implement different functionality for the cases of successful and unsuccessful data extraction. To determine whether the data extraction is successful, use an If conditional to check if the WebPageProperty variable is empty or not. To find more information about action error handling, refer to Handle errors in desktop flows. If you're unsure if an attribute exists on a web page, configure the On error options of the Get details of web page action to continue running the flow after failure. For example, web pages without meta keywords are a common occurrence. The retrieved information is stored for later use in a text variable named WebPageProperty.Īlthough most properties exist virtually on every web page, there are scenarios in which the Get details of web page action fails to retrieve the selected detail. The Get details of web page action offers six different options: A browser instance can be created with any browser-launching action.Īfter selecting the appropriate browser instance, choose the information you want to extract from the web page. To use the action, you need an already created browser instance that specifies the web page you want to extract details from. The Get details of web page action allows you to retrieve various details from web pages and handle them in your desktop flows. Once ready, the tool begins scraping the site data.Extracting information regarding web pages is an essential function in most web-related flows. Select one that suits your needs, input the domain or URL, and use the “Get all links” button. The URL option, however, is more suitable if you primarily need data for a specific page, including all its references and associated data, such as status codes. Choosing the domain option is beneficial if you want to extract all links from a website and identify any existing link issues. There are two methods to extract links from website, namely by domain or by search on a specific page. 
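The "retrieve a detail, then branch on whether it is empty" pattern can also be illustrated outside a desktop flow. The following is a minimal Python sketch, not Power Automate code: the helper names (`PageDetailsParser`, `get_web_page_property`, `describe_extraction`) are hypothetical, and it assumes the page HTML is already available as a string.

```python
from html.parser import HTMLParser


class PageDetailsParser(HTMLParser):
    """Collects the <title> text and <meta name=... content=...> pairs."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta = {}
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and attr_map.get("name"):
            # Store meta values by lower-cased name, e.g. "keywords".
            self.meta[attr_map["name"].lower()] = attr_map.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def get_web_page_property(html, detail):
    """Return the requested detail, or an empty string if the page lacks it."""
    parser = PageDetailsParser()
    parser.feed(html)
    if detail == "title":
        return parser.title.strip()
    return parser.meta.get(detail, "").strip()


def describe_extraction(html, detail):
    """Branch on an empty result, mirroring the If conditional in the text."""
    web_page_property = get_web_page_property(html, detail)
    if web_page_property == "":
        return f"No {detail} found; using fallback"
    return f"{detail}: {web_page_property}"
```

Returning an empty string instead of raising an error plays roughly the same role as letting the flow continue after a failure: the caller decides what the unsuccessful case should do.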
The link extractor tool serves to grab all links from a website or extract links on a specific webpage, including internal links, internal backlinks, internal backlink anchors, and external outgoing links for every URL on the site.

- Collect comprehensive data for every URL on your website, including internal links, internal backlinks, internal backlink anchors, and external outbound links.
- Detect all link-related issues, curate an exhaustive list of troublesome URLs, and provide straightforward solutions for these problems.
- Provide continuous tracking and monitoring capabilities, ensuring 24/7 attention, including backlink tracking, and preserving a complete log of changes and errors.

Our holistic SEO platform offers more than just link analysis. It includes a thorough SEO audit (both on-page and off-page), rank tracking, and site monitoring, along with other additional features.

Cases When Page or Domain URL Extractor is Needed

Use this multifaceted tool for various tasks, for instance evaluating the quantity of external and internal links on a webpage, verifying the status of links, or generating sitemaps. The website all URL finder feature can find all links on a website and detect various link issues, while explaining how to resolve them. It’s also handy for testing newly published landing pages for broken or incorrect references. The URL unpacker is highly beneficial, as it enables you to analyze URLs in bulk rather than checking them individually. It provides a wealth of data, ranging from status codes to anchor text and nofollow statuses.
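To make the kind of per-link data mentioned above more concrete, here is a minimal Python sketch that collects each link's resolved URL, anchor text, nofollow flag, and internal/external classification from a single page. It is an illustration under assumptions, not the tool's actual implementation: `LinkCollector` and `extract_links` are hypothetical names, the HTML is passed in as a string, and fetching pages or checking status codes is deliberately left out.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Gathers <a href> links with their anchor text and rel="nofollow" flag."""

    def __init__(self, page_url):
        super().__init__()
        self.page_url = page_url
        self.links = []
        self._current = None  # link currently being read, if any

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        href = attr_map.get("href")
        if not href:
            return  # anchors without href are not links
        rel_tokens = (attr_map.get("rel") or "").lower().split()
        self._current = {
            # Resolve relative hrefs against the page URL.
            "url": urljoin(self.page_url, href),
            "anchor": "",
            "nofollow": "nofollow" in rel_tokens,
        }

    def handle_data(self, data):
        if self._current is not None:
            self._current["anchor"] += data

    def handle_endtag(self, tag):
        if tag == "a" and self._current is not None:
            # Collapse runs of whitespace in the anchor text.
            self._current["anchor"] = " ".join(self._current["anchor"].split())
            self.links.append(self._current)
            self._current = None


def extract_links(html, page_url):
    """Return link records, marking a link internal when its host matches the page's."""
    collector = LinkCollector(page_url)
    collector.feed(html)
    site_host = urlparse(page_url).netloc.lower()
    for link in collector.links:
        link["internal"] = urlparse(link["url"]).netloc.lower() == site_host
    return collector.links
```

Comparing hosts exactly is the simplest possible rule; a real crawler might also want to treat subdomains or `www.` variants as internal, which this sketch does not attempt.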
